
Whenever you fix a bug, please add a regression test for it: this is a test (ideally automatic) that fails before the fix, exhibiting the bug, and passes after the fix. Whenever you implement a new feature, please add tests that verify that the new feature works as intended. Once you've written and committed your tests (along with your fix or new feature), you can check out the branch on which your work is based and then check out, into that working tree, the test files for your new tests; this lets you verify that the tests do fail on the prior branch.

General principles

Use initTestCase and cleanupTestCase for setup and teardown of test harness

Tests that require preparations should use the global initTestCase for that purpose. In the end, every test should leave the system in a usable state, so it can be run repeatedly.

Cleanup operations should be handled in cleanupTestCase, so they get run even if the test fails and exits early. Another option is to use RAII, with cleanup operations called in destructors, to ensure they happen when the test function returns and the object goes out of scope.

Test functions should be self-contained

Within a test program, test functions should be independent of each other and not rely upon previous test functions having been run. You can check this by running the test function on its own with 'tst_foo testname'.

Test the full stack

If an API is implemented in terms of pluggable or platform-specific backends that do the heavy lifting, make sure to write tests that cover the code paths all the way down into the backends. Testing the upper-layer API parts using a mock backend is a nice way to isolate errors in the API layer from the backends, but it is complementary to tests that exercise the actual implementation with real-world data.

Tests should complete quickly

Tests should not waste time by being unnecessarily repetitious, using inappropriately large volumes of test data, or by introducing needless idle time. This is particularly true for unit testing, where every second of extra unit test execution time makes CI testing of a branch across multiple targets take longer. Remember that unit testing is separate from load and reliability testing, where larger volumes of test data and lengthier test runs are expected. Benchmark tests, which typically execute the same test multiple times, should be in the separate tests/benchmarks directory and not mixed with functional unit tests.

Use data-driven testing as much as possible

Data-driven tests make it easier to add new tests for boundary conditions found in later bug reports. Using a data-driven test rather than testing several items in sequence in a test function also prevents an earlier QVERIFY/QCOMPARE failure from blocking the reporting of later checks.

Always respect QCOMPARE parameter semantics

The first parameter to QCOMPARE should always be the actual value produced by the code-under-test, while the second parameter should always be the expected value. When the values don't match, QCOMPARE prints them with the labels 'Actual' and 'Expected'.
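The two points above, table-driven rows and the actual-then-expected order, can be illustrated with a small framework-independent sketch. It uses only the C++ standard library and is not Qt Test code: clampPercent, Row, runRows and boundaryRows are hypothetical names invented for the illustration. In Qt Test itself the rows would come from a _data() function using QTest::addColumn and QTest::newRow, and would be read with QFETCH.

```cpp
#include <string>
#include <vector>

// Hypothetical function under test: clamps a percentage to the range [0, 100].
int clampPercent(int value) {
    if (value < 0) return 0;
    if (value > 100) return 100;
    return value;
}

// One row of test data: a descriptive tag, the input, and the expected result.
struct Row {
    std::string tag;
    int input;
    int expected;
};

// Runs every row and collects one message per failing row.
// A failure in one row does not stop the later rows from being checked.
std::vector<std::string> runRows(const std::vector<Row>& rows) {
    std::vector<std::string> failures;
    for (const Row& row : rows) {
        const int actual = clampPercent(row.input);  // produced by the code under test
        if (actual != row.expected) {
            // Actual first, expected second, mirroring the QCOMPARE labels.
            failures.push_back(row.tag + ": Actual " + std::to_string(actual) +
                               ", Expected " + std::to_string(row.expected));
        }
    }
    return failures;
}

// Boundary conditions; a case found in a later bug report becomes one more row.
std::vector<Row> boundaryRows() {
    return {
        {"below range", -1, 0},
        {"lower bound", 0, 0},
        {"mid range", 50, 50},
        {"upper bound", 100, 100},
        {"above range", 101, 100},
    };
}
```

Calling runRows(boundaryRows()) yields an empty list when every row passes. If several rows are wrong, each one is reported with its own tag, which is the behaviour a sequence of checks inside a single test function cannot provide.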

If the parameter order is swapped, debugging a failing test can be confusing.

Use QSignalSpy to verify signal emissions

The QSignalSpy class provides an elegant mechanism for capturing the list of signals emitted by an object.

Verify the validity of a QSignalSpy after construction

QSignalSpy's constructor does a number of sanity checks, such as verifying that the signal to be spied upon actually exists. To make diagnosis of test failures easier, the result of these checks should be checked by calling 'QVERIFY(spy.isValid());' before proceeding further with a test.

Use coverage tools to direct testing effort

Use a coverage tool to help write tests that cover as many statements, branches and conditions as possible in the function or class being tested.

The earlier this is done in the development cycle for a new feature, the easier it will be to catch regressions later as the code is refactored.

Naming of test functions is important

Test functions should be named to make it obvious what the function is trying to test.

Naming test functions using the bug-tracking identifier is to be avoided, as these identifiers soon become obsolete if the bug tracker is replaced, and some bug trackers may not be accessible to external users (e.g. the internal Jira server that stores Company Confidential data). To track which bug-tracking task a test function relates to, it should be sufficient to include the bug identifier in the commit message. Source management tools such as 'git blame' can then be used to retrieve this information.

Use appropriate mechanisms to exclude inapplicable tests

QSKIP should be used to handle cases where a test function is found at run-time to be inapplicable in the current test environment.

For example, a test of font rendering may call QSKIP if needed fonts are not present on the test system.

Beware moc limitation when excluding tests

The moc preprocessor does not have access to all the built-in defines that the compiler has, and those macros are often used for feature detection of the compiler. If a test function is excluded conditionally on such a macro, it may happen that moc does not 'see' the test, and as a result the test would never be called.

If an entire test program is inapplicable for a specific platform, the best approach is to use the parent directory's .pro file to avoid building the test. For example, if the tests/auto/gui/someclass test is not valid for Mac OS X, add the following to tests/auto/gui.pro:

    mac*:SUBDIRS -= someclass

Prefer QEXPECT_FAIL to QSKIP for known bugs

If a test exposes a known bug that will not be fixed immediately, use the QEXPECT_FAIL macro to document the failure and reference the bug-tracking identifier for the known issue. When the test is run, expected failures will be marked as XFAIL in the test output and will not be counted as failures when setting the test program's return code.

If an expected failure does not occur, an XPASS (unexpected pass) will be reported in the test output and will be counted as a test failure. For known bugs, QEXPECT_FAIL is better than QSKIP because a developer cannot fix the bug without an XPASS result reminding them that the test needs to be updated too.
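The bookkeeping described above can be modelled in a few lines. This is only a sketch of the rules as stated in the text, written in plain C++; it is not how Qt Test is implemented, and Handling, Outcome, classify and countsAsFailure are hypothetical names.

```cpp
// How a test function treats a known bug (hypothetical model, not Qt API).
enum class Handling { None, ExpectFail, Skip };

// Outcome labels, named after the markers that appear in the test output.
enum class Outcome { Pass, Fail, XFail, XPass, Skip };

// Classifies one check, given how the known bug is handled and whether
// the check would currently pass.
Outcome classify(Handling handling, bool checkPasses) {
    switch (handling) {
    case Handling::Skip:
        return Outcome::Skip;  // the check never runs, so a fix goes unnoticed
    case Handling::ExpectFail:
        return checkPasses ? Outcome::XPass : Outcome::XFail;
    case Handling::None:
        break;
    }
    return checkPasses ? Outcome::Pass : Outcome::Fail;
}

// Only real failures and unexpected passes affect the return code.
bool countsAsFailure(Outcome outcome) {
    return outcome == Outcome::Fail || outcome == Outcome::XPass;
}
```

While the bug exists, both ExpectFail and Skip keep the run green. Once the bug is fixed, ExpectFail turns into XPass and fails the run, which is the reminder to update the test; Skip stays silent.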

If QSKIP is used, there is no reminder to revise or re-enable the test, without which subsequent regressions would not be reported.

Avoid Q_ASSERT in tests

The Q_ASSERT macro causes a program to abort whenever the asserted condition is false, but only if the software was built in debug mode. In both release and debug+release builds, Q_ASSERT does nothing. Q_ASSERT should be avoided because it makes tests behave differently depending on whether a debug build is being tested, and because it causes a test to abort immediately, skipping all remaining test functions and returning incomplete and/or malformed test results.

It also skips any tear-down or tidy-up that was supposed to happen at the end of the test, so it can leave the workspace in an untidy state (which may cause complications for other tests). Instead of Q_ASSERT, the QCOMPARE or QVERIFY-style macros should be used. These cause the current test to report a failure and terminate, but allow the remaining test functions to be executed and the entire test program to terminate normally. QVERIFY2 even allows a descriptive error message to be output into the test log.

Hints on writing reliable tests

Avoid side-effects in verification steps

When performing verification steps in an autotest using QCOMPARE, QVERIFY and friends, side-effects should be avoided. Side-effects in verification steps can make a test difficult to understand and can easily break a test in difficult-to-diagnose ways when the test is changed to use QTRY_VERIFY, QTRY_COMPARE or QBENCHMARK, all of which can execute the passed expression multiple times, thus repeating any side-effects.
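The hazard is easy to reproduce outside Qt. The sketch below uses a hypothetical helper, retryUntilTrue, as a simplified stand-in for a check that may evaluate its condition several times; it is not the implementation of any Qt macro, and the two check functions are likewise invented for the illustration.

```cpp
#include <cstddef>
#include <deque>
#include <functional>

// Simplified stand-in for a retrying check: evaluates the condition up to
// maxAttempts times and returns true as soon as it holds.
bool retryUntilTrue(const std::function<bool()>& condition, int maxAttempts) {
    for (int attempt = 0; attempt < maxAttempts; ++attempt) {
        if (condition())
            return true;
    }
    return false;
}

// Problematic: the condition removes an element every time it is evaluated.
// Returns the number of elements left in the queue after the check.
std::size_t checkWithSideEffect(std::deque<int> queue, int wanted) {
    retryUntilTrue([&] {
        if (queue.empty())
            return false;
        const int front = queue.front();
        queue.pop_front();  // side-effect inside the verification step
        return front == wanted;
    }, 3);
    return queue.size();
}

// Better: perform the action once, then verify a plain value.
std::size_t checkWithoutSideEffect(std::deque<int> queue, int wanted) {
    if (queue.empty())
        return 0;
    const int front = queue.front();
    queue.pop_front();  // the action happens exactly once
    retryUntilTrue([&] { return front == wanted; }, 3);
    return queue.size();
}
```

With a queue of 1, 2, 3 and a wanted value of 3, the first variant silently consumes all three elements before the condition holds, while the second always removes exactly one, however often the condition is re-evaluated.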

Avoid fixed wait times for asynchronous behaviour

In the past, many unit tests were written to use qWait to delay for a fixed period between performing some action and waiting for some asynchronous behaviour triggered by that action to be completed. For example, changing the state of a widget and then waiting for the widget to be repainted. Such timeouts would often cause failures when a test written on a workstation was executed on a device, where the expected behaviour might take longer to complete. The natural response to this kind of failure was to increase the fixed timeout to a value several times larger than needed on the slowest test platform. This approach slows down the test run on all platforms, particularly for table-driven tests. If the code under test issues Qt signals on completion of the asynchronous behaviour, a better approach is to use the QSignalSpy class (part of qtestlib) to notify the test function that the verification step can now be performed.

If there are no Qt signals, use the QTRY_COMPARE and QTRY_VERIFY macros, which periodically test a specified condition until it becomes true or some maximum timeout is reached. These macros prevent the test from taking longer than necessary, while avoiding breakages when tests are written on workstations and later executed on embedded platforms. If there are no Qt signals, and you are writing the test as part of developing a new API, consider whether the API could benefit from the addition of a signal that reports the completion of the asynchronous behaviour.

Beware of timing-dependent behaviour

Some test strategies are vulnerable to timing-dependent behaviour of certain classes, which can lead to tests that fail only on certain platforms or that do not return consistent results. One example of this is text-entry widgets, which often have a blinking cursor that can make comparisons of captured bitmaps succeed or fail depending on the state of the cursor when the bitmap is captured, which may in turn depend on the speed of the machine executing the test. When testing classes that change their state based on timer events, the timer-based behaviour needs to be taken into account when performing verification steps.

Due to the variety of timing-dependent behaviour, there is no single generic solution to this testing problem. In the example, potential solutions include disabling the cursor blinking behaviour (if the API provides that feature), waiting for the cursor to be in a known state before capturing a bitmap (e.g. by subscribing to an appropriate signal if the API provides one), or excluding the area containing the cursor from the bitmap comparison.

Prefer programmatic verification methods to bitmap capture and comparison

While necessary in many situations, verifying test results by capturing and comparing bitmaps can be quite fragile and labour intensive.

For example, a particular widget may have different appearance on different platforms or with different widget styles, so reference bitmaps may need to be created multiple times and then maintained in the future as Qt's set of supported platforms evolves. Bitmap comparisons can also be influenced by factors such as the test machine's screen resolution, bit depth, active theme, colour scheme, widget style, active locale (currency symbols, text direction, etc), font size, transparency effects, and choice of window manager.

Where possible, verification by programmatic means (that is, by verifying properties of objects and variables) is preferable to using bitmaps.

Hints on producing readable and helpful test output

Explicitly ignore expected warning messages

If a test is expected to cause Qt to output a warning or debug message on the console, the test should call QTest::ignoreMessage to filter that message out of the test output (and to fail the test if the message is not output). If such a message is only output when Qt is built in debug mode, use QLibraryInfo::isDebugBuild to determine whether the Qt libraries were built in debug mode. (Using '#ifdef QT_DEBUG' in this case is insufficient, as it will only tell you whether the test was built in debug mode, and that is not a guarantee that the Qt libraries were also built in debug mode.)

Avoid printing debug messages from autotests

Autotests should not produce any unhandled warning or debug messages. This will allow the CI Gate to treat new warning or debug messages as test failures. Adding debug messages during development is fine, but these should be either disabled or removed before a test is checked in.

Prefer well-structured diagnostic code to quick-and-dirty debug code

Any diagnostic output that would be useful if a test fails should be part of the regular test output rather than being commented out, disabled by preprocessor directives or enabled only in debug builds. If a test fails during continuous integration, having all of the relevant diagnostic output in the CI logs could save you a lot of time compared to enabling the diagnostic code and testing again, especially if the failure was on a platform that you don't have on your desktop.

Diagnostic messages in tests should use Qt's output mechanisms (e.g. qDebug, qWarning, etc.) rather than stdio.h or iostream.h output mechanisms. The latter bypass Qt's message handling and prevent testlib's -silent command-line option from suppressing the diagnostic messages, which could result in important failure messages being hidden in a large volume of debugging output.

Prefer QCOMPARE over QVERIFY for value comparisons

QVERIFY should be used for verifying boolean expressions, except where the expression directly compares two values.

QVERIFY(x == y) and QCOMPARE(x, y) are equivalent; however, QCOMPARE is more verbose and outputs both expected and actual values when the comparison fails.

Use QVERIFY2 for extra failure details

QVERIFY2 should be used when it is practical and valuable to put additional information into the test failure report. For example, if you have a file object and you are testing its open function, you may write a test with a statement like:

    QVERIFY2(opened, qPrintable(QString("open %1: %2").arg(file.fileName()).arg(file.errorString())));

If the check fails, the report then includes the reason:

    FAIL!  : tst_QFile::open_write() 'opened' returned FALSE. (open /tmp/qt.A3B42Cd: No space left on device)

Much better than a bare QVERIFY(opened), which would only report that 'opened' returned FALSE!

And, if this branch is being tested in the Qt CI system, the above detailed failure message will go straight into any emailed reports.

Hints on performance/benchmark testing

Verify occurrences of QBENCHMARK and QTest::setBenchmarkResult

A performance test should contain either a single QBENCHMARK macro or a single call to QTest::setBenchmarkResult.

Multiple occurrences of QBENCHMARK or QTest::setBenchmarkResult in the same test function make no sense. At most one performance result can be reported per test function, or per data tag in a data-driven setup.

Avoid changing a performance test

Avoid changing the test code that forms (or influences) the body of a QBENCHMARK macro, or the test code that computes the value passed to QTest::setBenchmarkResult. Differences in successive performance results should ideally be caused only by changes to the product we are testing (i.e. the Qt library). Changes to the test code can themselves change the measured result, so that differences between runs no longer reflect the product alone.

Verify a performance test if possible

In a test function that measures performance, the QBENCHMARK or QTest::setBenchmarkResult should if possible be followed by a verification step using QCOMPARE, QVERIFY and friends. This can be used to flag a performance result as invalid if we know that we measured a different code path than the intended one.

A performance analysis tool can use this information to filter out invalid results. For example, an unexpected error condition will typically cause the program to bail out prematurely from the normal program execution, and thus falsely show a dramatic performance increase.
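The idea of following a measurement with a verification step can be sketched without Qt. The code below is a standard-library illustration under stated assumptions, not a replacement for QBENCHMARK: sumValues, SumResult, Measurement and measureSum are hypothetical names, and the early return on empty input stands in for an unexpected error path.

```cpp
#include <chrono>
#include <numeric>
#include <vector>

// Result of the hypothetical function under test.
struct SumResult {
    bool ok;          // false when the function bailed out early
    long long value;  // the sum, meaningful only when ok is true
};

// Hypothetical function under test: sums the values, but bails out early
// on empty input, standing in for an unexpected error condition.
SumResult sumValues(const std::vector<int>& values) {
    if (values.empty())
        return {false, 0};
    return {true, std::accumulate(values.begin(), values.end(), 0LL)};
}

// A timing together with a flag saying whether it can be trusted.
struct Measurement {
    std::chrono::nanoseconds elapsed;
    bool valid;  // false if the measured run did not take the intended path
};

Measurement measureSum(const std::vector<int>& values, long long expectedSum) {
    const auto start = std::chrono::steady_clock::now();
    const SumResult result = sumValues(values);  // the measured section
    const auto stop = std::chrono::steady_clock::now();

    // Verification step after the measurement: a fast but wrong run is
    // flagged as invalid instead of being reported as a speed-up.
    const bool valid = result.ok && result.value == expectedSum;
    return {std::chrono::duration_cast<std::chrono::nanoseconds>(stop - start), valid};
}
```

A run over empty input finishes almost instantly, but measureSum marks it as invalid, so an analysis tool reading the flag can discard the apparent improvement.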

Writing testable code

Break dependencies

The idea of unit testing is to use every class in isolation. Since many classes instantiate other classes, it is often not possible to instantiate one class separately. Therefore a technique called dependency injection should be used. With dependency injection, object creation is separated from object use. A factory is responsible for building object trees. Other objects manipulate these objects through abstract interfaces. This technique works well for data-driven applications.
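A minimal sketch of this separation, in plain C++ with hypothetical class names (Clock, Greeter, FixedClock) chosen only for the illustration: the class under test receives its dependency through an abstract interface instead of creating it, so a test can hand in a controllable substitute.

```cpp
#include <string>

// Abstract interface: the class under test knows only this.
class Clock {
public:
    virtual ~Clock() = default;
    virtual int currentHour() const = 0;  // hour of day, 0 to 23
};

// Class under test: it is given its dependency rather than constructing it.
class Greeter {
public:
    explicit Greeter(const Clock& clock) : m_clock(clock) {}

    std::string greeting() const {
        return m_clock.currentHour() < 12 ? "Good morning" : "Good afternoon";
    }

private:
    const Clock& m_clock;  // must outlive the Greeter
};

// Substitute used by tests: reports whatever hour the test chooses.
class FixedClock : public Clock {
public:
    explicit FixedClock(int hour) : m_hour(hour) {}
    int currentHour() const override { return m_hour; }

private:
    int m_hour;
};
```

In production code a factory would create a Clock implementation backed by the system time and pass it to Greeter; in a test, FixedClock makes both branches of greeting() reachable without waiting for a particular time of day.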

For GUI applications this approach can be difficult, as there are plenty of object creations and destructions going on. To verify the correct behaviour of classes that depend on abstract interfaces, mocks can be used.

Compile all classes in a static library

In small to medium-sized projects there is typically a build script which lists all source files and then compiles the executable in one go. The build scripts for the tests must then list the needed source files again. It is easier to list the source files and the headers only once, in a script that builds a static library. Then main is linked against the static library to build the executable, and the tests are linked against the static library as well.

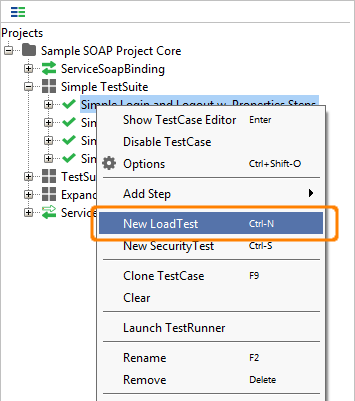

Building a test suite with qtestlib

qtestlib provides the tools to build an executable which contains one test class, which typically tests one class of production code. In a real-world project many classes should be tested by running one command. This collection of tests is also called a test suite.

Using qmake

In Qt 4.7 or later, place CONFIG+=testcase in each test program's .pro file. Then, from a parent subdirs project, you may run make check to run all testcases. The behavior may be customized in a few different ways:

    make -j1 check - run one test at a time
    make -j4 check - run four tests at a time (make sure the tests are written to cope with this!)
    make check TESTRUNNER=path/to/testrunner - run autotests through a custom test runner script (which may, e.g., handle crashes and failures)
    make check TESTARGS=-xml - run autotests in XML logging mode

Using CMake and CTest

The KDE project uses CMake and CTest to make a test suite. With CMake it is possible to label build targets as tests. All labeled targets will be run when make test is called on the command line. There are several other advantages with CMake. The result of a test run can be published on a web server using CDash with virtually no effort.

Common problems with test machine setup

Screen savers

Screen savers can interfere with some of the tests for GUI classes, causing unreliable test results. Screen savers should be disabled to ensure that test results are consistent and reliable.

System dialogs

Dialogs displayed unexpectedly by the operating system or other running applications can steal input focus from widgets involved in an autotest, causing unreproducible failures.

Examples encountered in the past include online update notification dialogs on Mac OS X, false alarms from virus scanners, scheduled tasks such as virus signature updates, software updates pushed out to workstations by IT, and chat programs popping up windows on top of the stack.

Display usage

Some tests use the test machine's display, mouse and keyboard and can thus fail if the machine is being used for something else at the same time (e.g. as somebody's workstation), or if multiple tests are run in parallel. The CI system uses dedicated test machines to avoid this problem, but if you don't have a dedicated test machine, you may be able to solve this problem by running the tests on a second display. On Unix, one can also run the tests on a nested or virtual X-server, such as Xephyr. For example, to run the entire set of tests under Xephyr, execute the following commands:

    Xephyr :1 -ac -screen 1920x1200 >/dev/null 2>&1 &
    sleep 5
    DISPLAY=:1 icewm >/dev/null 2>&1 &
    cd tests/auto
    make
    DISPLAY=:1 make -k -j1 check

Hint for Nvidia binary driver users: Xephyr might not be able to provide the GLX extension. In this case it helped to force the Mesa libGL with 'export LD_PRELOAD=/usr/lib/mesa-diverted/x86_64-linux-gnu/libGL.so.1'. However, when tests are run under Xephyr and under the real X server with different libGL versions, the QML disk cache can make the tests crash; this can be avoided by setting QML_DISABLE_DISK_CACHE=1. In Qt 5 there is a nice alternative, the offscreen platform plugin, which you can use like this:

    TESTARGS='-platform offscreen' make check -k -j1

Window managers

On Unix, at least two autotests (tst_examples and tst_gestures) require a window manager to be running. Therefore, if running these tests under a nested X-server, you must also run a window manager in that X-server. Your window manager must be configured to position all windows on the display automatically. Some window managers (e.g. twm) have a mode where the user must manually position new windows, and this prevents the test suite from running without user interaction. Note that the twm window manager has been found to be unsuitable for running the full suite of Qt autotests, as the tst_gestures autotest causes twm to forget its configuration and revert to manual window placement.

Miscellaneous topics

QSignalSpy and QVariant parameters

With Qt 4, QVariant parameters recorded by QSignalSpy have to be cast to QVariant (e.g., by qvariant_cast) to get the actual value; this is because QSignalSpy wraps the value inside another QVariant (of type QMetaType::QVariant).

The tst_qpropertyanimation autotest contains an example of this.

| Tool | Category | Author | Part of | Claim to fame |
|---|---|---|---|---|
| | unit testing | | | first unit test framework to be included in Python standard library; easy to use by people familiar with the xUnit frameworks; strong support for test organization and reuse via test suites |
| | unit testing | | | copy and paste output from shell session; unit tests themselves can serve as documentation when combined with epydoc |
| | unit testing | | | Used to be named py.test and was distributed as part of another project; standalone now. No API!; automatic collection of tests; simple asserts; strong support for test fixture/state management via setup/teardown hooks; strong debugging support via customized traceback |
| | unittest extensions | | | unit test framework; provides an alternate test discovery and running process for unittest, one that is intended to mimic the behavior of py.test as much as is reasonably possible without resorting to too much magic. More friendly with unittest-based tests than py.test. |
| | unittest extensions | | | unit test framework; provides enhanced test fixture setup, splitting of test suites into buckets for easy parallelization, PEP8 naming conventions and a fancy color test runner with lots of logging / reporting options |
| | unittest extensions | | | Extension of unittest to support writing asynchronous unit tests using Deferreds and new result types ('skip' and 'todo'). Includes a command-line program that does test discovery and integrates with doctest and coverage. |
| | unittest extensions | | | Transparently adds support for running unittest test cases/suites in a separate process: prevents system-wide changes by a test destabilising the test runner. It also allows reporting from tests in another process into the unittest framework, giving a single integrated test environment. |
| | unittest extensions | | | Provides a mechanism for managing 'resources' (expensive bits of infrastructure) that are needed by multiple tests. Resources are constructed and freed on demand, and the test run order can optionally be optimised to reduce the number of resource constructions and releases needed. Compatible with unittest. |
| | unit testing | | | Provides class-based test Fixtures, in which several (usually interrelated) objects that need to be set up for tests are lumped together on a single object, called a Fixture. Fixtures are reusable and can depend on other Fixtures in turn. Fixtures can be used with any test framework, but easy integration is provided for pytest. A few other test utilities are included as well. |
| | unittest extensions | | | Useful extensions to unittest derived from custom extensions by projects such as Twisted and Bazaar. |
| Sancho | unit testing | | | Sancho 2.1 runs tests, and provides output for tests that fail; Sancho 2.1 does not count tests passed or failed; targets projects that do not maintain failing tests |
| | unit testing | Zope3 community | | Powerful test runner that includes support for post-mortem debugging of test failures. Also includes profiling and coverage reporting. This is a standalone package that has no dependencies on Zope and works just fine with projects that don't use Zope. |
| | unit testing | | | Elegant unit testing framework with built-in coverage analysis, profiling, micro-benchmarking and a powerful command-line interface. |
| | unit testing | | | Tool that will automatically, or semi-automatically, generate unit tests for legacy systems written in Python. |
| | unittest extensions | | logilab-common | Gives more power to standard unittest. More assert methods; support for module-level setup/teardown; skip test feature. |
| | tests runner | | logilab-common | Tests finder / runner. Selectively run tests; stop on first failure; run pdb on failed tests; colorized reports; run tests with coverage / profile enabled. |
| | unittest extensions | | (distributed separately too) | An object-oriented interface to retrieve unittest test cases out of doctests. Hides initialization from doctests by allowing setUp and tearDown for each interactive example. Allows control over all the options provided by doctest. Specialized classes allow selective test discovery across a package hierarchy. |

The following tools are not currently being developed or maintained as far as we can see. They are here for completeness, with last activity date and an indication of what documentation there is.

If you know better, please edit.

| Tool | Category | Author | Claim to fame |
|---|---|---|---|
| | mocks, stubs, spy, and dummies | Gustavo Rezende | Elegant test doubles framework in Python (mocks, stubs, spy, and dummies) |
| Python Mock | mock testing | | Python Mock enables the easy creation of mock objects that can be used to emulate the behaviour of any class that the code under test depends on. You can set up expectations about the calls that are made to the mock object, and examine the history of calls made. This makes it easier to unit test classes in isolation. |
| | mock testing | | Based on a Java package. It uses a recording and replay model rather than using a specification language. Easymock lives up to its name compared to other mocking packages. Takes advantage of Python's dynamic nature for further improvements. |
| | mock testing | Michael Foord | Provides 'action - assertion' mocking pattern, instead of standard 'record - replay' pattern |
| pMock | mock testing | Graham Carlyle | Inspired by a Java library, pMock makes the writing of unit tests using mock object techniques easier. Development of pMock has long since stopped and so it can be considered dead. |
| | mock testing | | Embeds mock testing constructs inside doctest tests. |
| | mock testing | | Enables easier testing of Python programs that make use of Python bindings |
| | mock testing | | Graceful platform for test doubles in Python (mocks, stubs, fakes, and dummies). Well-documented and fairly feature-complete. |
| Stubble | stub testing | | Stubble allows you to write arbitrary classes for use as stubs instead of real classes while testing. Stubble lets you link a stub class loosely to the real class which it is a stub for. This information is then used to ensure that tests will break if there is a discrepancy between the interface supported by your stub class and that of the real class it stands in for. |
| Mox | mock testing | smiddlek, dglasser | Mox is based on a Java mock object framework. Mox will make mock objects for you, so you don't have to create your own! It mocks the public/protected interfaces of Python objects. You set up your mock objects' expected behavior using a domain specific language (DSL), which makes it easy to use, understand, and refactor! |
| Mocktest | mock testing | Tim Cuthbertson (gfxmonk) | Mocktest allows you to mock / stub objects and make expectations about the methods you expect to be called as well as the arguments they should be called with. Expectations are very readable and expressive, and checked automatically. Any stubbed methods are reverted after each test case. Still under development, so subject to change |
| | mock and stub testing | | A module for using fake objects (mocks, stubs, etc.) to test real ones. Uses a declarative syntax like jMock whereby you set up expectations for how an object should be used. An error will be raised if an expectation is not met. |
| | mock and stub testing | | A port of the Mockito mocking framework to Python. (Technically speaking, Mockito is a Test Spy framework.) |
| | mock testing | Geoff Bache | True record-replay approach to mocking. Requires no coding, just telling it which modules/attributes you want to mock. Then stores the behaviour in an external file, which can be used to test the code without those modules installed. |
| | mock/stub/spy testing and fake objects | | Port of the popular Ruby mocking library to Python. Includes automatic integration with most popular test runners. |
| | | | Easy and powerful stubs, spies and mocks. Free and restricted doubles using hamcrest matchers for all assertions. It provides a wrapper for the pyDoubles framework. |
| | spies and mock responses | | Lightweight spies and mock responses, and a capture/replay framework (via the Story/Replay context managers). |

Fuzz Testing Tools

According to Wikipedia, fuzz testing is a software testing technique whose basic idea is to attach the inputs of a program to a source of random data ('fuzz').

If the program fails (for example, by crashing, or by failing built-in code assertions), then there are defects to correct. The great advantage of fuzz testing is that the test design is extremely simple, and free of preconceptions about system behavior.

| Tool | Author | Claim to fame |
|---|---|---|
| Hypothesis | | Hypothesis combines unit testing and fuzz testing by letting you write tests parametrized by random data matching some specification. It then finds and minimizes examples that make your tests fail. |
| | Ivan Moore | Tests your tests by mutating source code and finding tests that don't fail! |
| Peach | Michael Eddington | Peach can fuzz just about anything from .NET, COM/ActiveX, SQL, shared libraries/DLLs, network applications, web, you name it. |
| antiparser | Dmckinney | The purpose of antiparser is to provide an API that can be used to model network protocols and file formats by their composite data types. Once a model has been created, the antiparser has various methods for creating random sets of data that deviate in ways that will ideally trigger software bugs or security vulnerabilities. |
| Taof | Rodrigomarcos | Taof is a GUI cross-platform Python generic network protocol fuzzer. It has been designed for minimizing set-up time during fuzzing sessions and it is especially useful for fast testing of proprietary or undocumented protocols. |
| Fusil | | It helps to start a process with a prepared environment (limit memory, environment variables, redirect stdout, etc.), start a network client or server, and create mangled files. Fusil has many probes to detect program crashes: watch process exit code, watch process stdout and syslog for text patterns (e.g. 'segmentation fault'), watch session duration, watch CPU usage (process and system load), etc. |

Web Testing Tools

First, let's define some categories of Web testing tools:

- Browser simulation tools: simulate browsers by implementing the HTTP request/response protocol and by parsing the resulting HTML.
- Browser automation tools: automate browsers by driving them, for example via COM calls in the case of Internet Explorer, or XPCOM in the case of Mozilla.
- In-process or unit-test-type tools: call an application in the same process, instead of generating an HTTP request; so an exception in the application would go all the way up to the command runner (py.test, unittest, etc.).

| Tool | Category | Author | Part of | Claim to fame |
|---|---|---|---|---|
| | Browser simulation & In-process | | | offers simple commands for navigating Web pages, posting forms and asserting conditions; can be used as shell script or Python module; stress-test functionality |

| Tool | Author | Claim to fame |
|---|---|---|
| | | Simplest GUI automation with Python on Windows. Lets you automate your computer with simple commands such as start, click and write. |
| | | Uses the X11 accessibility framework (AT-SPI) to drive applications, so works well with the GNOME desktop on Unixes. Has extensive tests for the Evolution groupware client. |
| | Mark | Simple Windows (NT/2K/XP) GUI automation with Python. There are tests included for localization testing but there is no limitation to this. Most of the code at the moment is for recovering information from Windows windows and performing actions on those controls. The idea is to have high-level methods for standard controls rather than rely on sending keystrokes to the applications. |
| | Team members | OS X Cocoa accessibility-based automation library for Mac |
| | Geoff Bache | Domain-language based UI testing framework with a recorder. Generates plain-text descriptions (ASCII art) of what the GUI looks like during the test, intended to be used in conjunction with a separate tool. Mechanism for being able to record synchronisation points. Currently has mature support for PyGTK, beta status support for SWT/Eclipse RCP and Tkinter, and an early prototype for wxPython. Swing support is being developed. |
| | Raimund Hocke and the open-source community | Python scripts and visual technology to automate and test graphical user interfaces using screenshot images (API for Java available) |

The following tools are not currently being developed or maintained as far as we can see. They are here for completeness, with last activity date and an indication of what documentation there is. If you know better, please edit.

Tool Last Activity Docs Author Claim to fame

2013, Good docs: Created by Red Hat engineers on Linux. Uses the X11 accessibility framework (AT-SPI) to drive applications, so it works well with the GNOME desktop on Unixes. Has extensive tests for the Evolution groupware client.

2005, Good docs, Dr Tim Couper: Windows Application Test System Using Python, another Windows GUI automation tool.

2005, Limited docs: Python helper library for testing Python GUI applications, with being the most mature.

2005, Limited docs: pyAA is an object-oriented Python wrapper around the client-side functionality in the Microsoft Active Accessibility (MSAA) library. MSAA is a library for the Windows platform that allows client applications to inspect, control, and monitor events and controls in graphical user interfaces (GUIs), and server applications to expose runtime information about their user interfaces. See the tutorial for more info.

2004, No docs: aims to be a GUI unit-testing library for Python; initially provided solely for, but it may be extended in the future.

2003, No docs, Simon Brunning: Low-level library for Windows GUI automation used by PAMIE and WATSUP.

Source Code Checking Tools

Tool Author Claim to fame

Measures code coverage during Python execution; uses the code analysis tools and tracing hooks provided in the Python standard library to determine which lines are executable, and which have been executed.

figleaf is a Python code coverage analysis tool, built somewhat on the model of Ned Batchelder's fantastic coverage module. The goals of figleaf are to be a minimal replacement of 'coverage.py' that supports more configurable coverage gathering and reporting; figleaf is useful for situations where you are recording code coverage in multiple execution runs and/or want to tweak the reporting output.

Olivier Grisel: HTML test coverage reporting tool with white and blacklisting support.

The coverage langlet weaves monitoring commands, so-called sensors, into source code during global source transformation. When a statement is covered, the woven sensor responds. The coverage langlet is part of

Elegant unit testing framework with built-in coverage analysis, profiling, micro-benchmarking and a powerful command-line interface.

Instrumental is a Python code coverage tool that measures statement, decision, and during code execution. Instrumental works by modifying the AST on import and adding function calls that record the circumstances under which code is executed.

Continuous Integration Tools

Although not properly a part of testing tools, continuous integration tools are nevertheless an important addition to a tester's arsenal.

Tool Author Claim to fame

buildbot is a system to automate the compile/test cycle required by most software projects to validate code changes. By automatically rebuilding and testing the tree each time something has changed, build problems are pinpointed quickly, before other developers are inconvenienced by the failure.

Bitten is a Python-based framework for collecting various software metrics via continuous integration. It builds on to provide an integrated web-based user interface.

Heinrich Wendel of: SVNChecker is a framework for Subversion pre-commit hooks that implements checks of the files to be committed before they are committed. For example, you can check for the code style or unit tests. The output of the checks can be sent by mail, written into a file, or simply printed to the console.

An Automated Pythonic Code Tester: designed to run tests on a code repository on a daily basis. It comes with a set of predefined tests, essentially for Python packages, and a set of predefined reports to display execution results. However, it has been designed to be highly extensible, so you can write your own test or report using the Python language.

pony-build is a simple continuous integration package that lets you run a server to display client build results. It consists of two components: a server (which is run in some central and accessible location), and one or more clients (which must be able to contact the server via HTTP). Philosophy statement: good development tools for Python should be easy to install, easy to hack, and not overly constraining. Two out of three ain't bad ;).

Tox is a generic virtualenv management and test command line tool. You can use it to check that your package installs correctly with different Python versions and interpreters, to configure your test tool of choice and to act as a frontend to Continuous Integration servers.

Documentation and examples.

KREM is a very lightweight automation framework. KREM is also suitable for testing. KREM runs jobs made up of tasks executed in sequence, in parallel or a combination of both.

Automatic Test Runners

Tools that run tests automatically on file changes. They provide continuous feedback during development, before continuous integration tools act on commits.

Tool Author Claim to fame

Meme Dough: Monitors paths and upon detecting changes runs the specified command. The command may be any test runner. Uses Linux inotify, so it is fast with no disk churn.

Doug Latornell: Run the nose test discovery and execution tool whenever a source file is changed.

Jeff Winkler & Jerome Lacoste: A minimalist personal command-line-friendly CI server. Automatically runs your build whenever one of the monitored files of the monitored projects has changed.

Noah Kantrowitz: Continuous testing for paranoid developers.

Test Fixtures

Tool Author Part of Claim to fame

Module for loading and referencing test data.

Russell Keith-Magee: A test case for a database-backed website isn't much use if there isn't any data in the database. To make it easy to put test data into the database, Django provides a fixtures framework.

Chris Withers: A collection of helpers and mock objects for unit tests and doc tests.

Fred Drake: Test support composition, providing for fixture-specific APIs with unittest.TestCase.

Miscellaneous Python Testing Tools

Tool Author Part of Claim to fame

Enables tests of Python regular expressions in a web browser; it uses SimpleHTTPServer and AJAX.

A wxPython GUI to the re module.

A Qt (KDE) regular expression debugger.

A simple environment for testing command-line applications, running commands and seeing what files they write to.

Supports profiling, debugging and optimization regarding memory-related issues in Python programs.

Nick Smallbone: A memory usage profiler for Python code.

Memory profiling of Python processes; memory dumps can be viewed in

Reginald B. Charney: Generates metrics (e.g., LoC, % comments, etc.) for Python code.

Curt Finch: Analyzes cyclomatic complexity of Python code (written in Perl).

Test and automation framework, in Python.

Records interactive sessions and extracts test cases when they get replayed.

Test combinations generator (in Python) that creates a set of tests using the 'pairwise combinations' method, which reduces the number of combinations of variables to a smaller set that covers most situations. See for more info on this functional testing technique.
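The 'pairwise combinations' idea can be sketched with a simple greedy selection. This is an illustrative implementation under stated assumptions, not the API or algorithm of the generator listed above: the function name pairwise_tests is hypothetical, parameter values must be hashable, and the sketch enumerates the full Cartesian product, so it only suits small inputs.

```python
from itertools import combinations, product


def pairwise_tests(parameters):
    """Greedy all-pairs selection; `parameters` maps a name to a list of values."""
    names = list(parameters)
    positions = range(len(names))

    # Every (parameter, value) pair combination that some test must exercise.
    uncovered = set()
    for a, b in combinations(positions, 2):
        for va, vb in product(parameters[names[a]], parameters[names[b]]):
            uncovered.add((a, va, b, vb))

    def pairs_of(row):
        return {(a, row[a], b, row[b]) for a, b in combinations(positions, 2)}

    candidates = list(product(*(parameters[name] for name in names)))
    chosen = []
    while uncovered:
        # Pick the full combination that covers the most still-uncovered pairs.
        best = max(candidates, key=lambda row: len(pairs_of(row) & uncovered))
        uncovered -= pairs_of(best)
        chosen.append(dict(zip(names, best)))
    return chosen
```

For example, with three operating systems, two browsers and two locales there are 12 full combinations, while the selected set is smaller and still contains every value pair at least once. A greedy choice is not guaranteed to be minimal; it trades optimality for simplicity.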

Model-based testing framework where the models are written in Python. Supports offline and on-the-fly testing. It uses composition for scenario control. Coverage can be guided by a programmable strategy.

PythonTestingToolsTaxonomy (last edited 2018-11-25 08:38:31 by ).

Use UI automation to test your code

Automated tests that drive your application through its user interface (UI) are known as coded UI tests (CUITs) in Visual Studio. These tests include functional testing of the UI controls.

They let you verify that the whole application, including its user interface, is functioning correctly. Coded UI tests are particularly useful where there is validation or other logic in the user interface, for example in a web page. They are also frequently used to automate an existing manual test.

Note: Coded UI Test for automated UI-driven functional testing is deprecated. Visual Studio 2019 is the last version where Coded UI Test will be available. We recommend using for testing web apps and for testing desktop and UWP apps.

A typical development experience might be one where, initially, you simply build your application and click through the UI controls to verify that things are working correctly. Then you might decide to create an automated test so that you don't need to continue to test the application manually. Depending on the particular functionality being tested in your application, you can write code for either a functional test or for an integration test that might or might not include testing at the UI level.

If you want to directly access some business logic, you might code a unit test. However, under certain circumstances, it can be beneficial to include testing of the various UI controls in your application. A coded UI test can verify that code churn does not impact the functionality of your application. Creating a coded UI test is easy. You simply perform the test manually while Coded UI Test Builder runs in the background. You can also specify what values should appear in specific fields.

Coded UI Test Builder records your actions and generates code from them. After the test is created, you can edit it in a specialized editor that lets you modify the sequence of actions. Alternatively, if you have a test case that was recorded in Microsoft Test Manager, you can generate code from that. For more information, see. The specialized Coded UI Test Builder and editor make it easy to create and edit coded UI tests, even if your main skills are concentrated in testing rather than coding.

But if you are a developer and you want to extend the test in a more advanced way, the code is structured so that it is straightforward to copy and adapt. For example, you might record a test to buy something at a website, and then edit the generated code to add a loop that buys many items.

Requirements

Visual Studio Enterprise and the Coded UI test component. For more information about which platforms and configurations are supported by coded UI tests, see.

Install the coded UI test component

To access the coded UI test tools and templates, install the Coded UI test component of Visual Studio 2017.

1. Launch Visual Studio Installer by choosing Tools > Get Tools and Features.
2. In Visual Studio Installer, choose the Individual components tab, and then scroll down to the Debugging and testing section. Select the Coded UI test component.
3. Select Modify.

Create a coded UI test

1. Create a Coded UI Test project. Coded UI tests must be contained in a coded UI test project. If you don't already have a coded UI test project, create one. Choose File > New > Project to open the New Project dialog box. In the categories pane on the left, expand Installed > Visual Basic or Visual C# > Test. Select the Coded UI Test Project template, and then choose OK.
   Note: If you don't see the Coded UI Test Project template, you need to install the coded UI test component as described above.
2. Add a coded UI test file. If you just created a Coded UI project, the first CUIT file is added automatically. To add another test file, open the shortcut menu on the coded UI test project in Solution Explorer, and then choose Add > Coded UI Test. In the Generate Code for Coded UI Test dialog box, choose Record actions > Edit UI map or add assertions. The Coded UI Test Builder appears.
3. Record a sequence of actions. To start recording, choose the Record icon. Perform the actions that you want to test in your application, including starting the application if that is required. For example, if you are testing a web application, you might start a browser, navigate to the website, and log in to the application. To pause recording, for example if you have to deal with incoming mail, choose Pause. To delete actions that you recorded by mistake, choose Edit Steps. To generate code that will replicate your actions, choose the Generate Code icon and type a name and description for your coded UI test method.
   Warning: All actions performed on the desktop will be recorded. Pause the recording if you are performing actions that may lead to sensitive data being included in the recording.
4. Verify the values in UI fields such as text boxes. Choose Add Assertions in the Coded UI Test Builder, and then choose a UI control in your running application. In the list of properties that appears, select a property, for example, Text in a text box. On the shortcut menu, choose Add Assertion. In the dialog box, select the comparison operator, the comparison value, and the error message. Close the assertion window and choose Generate Code.

T-Plan Robot Enterprise (formerly known as ) is the most flexible and universal black box automation tool on the market. Developed on image-based testing principles, it provides a human-like approach to recording and playing back user actions at the GUI level, and it performs well in situations where other tools may fail. With a long history in, controlling repeatable actions at the screen level, our software is an ideal fit for replicating the actions of a human user in the new industry of automated processing, otherwise known as or RPA. T-Plan Robot Enterprise is very versatile and functions as a , or as a.

T-Plan Robot is a highly adaptable, easy-to-use, image-based black box automation tool that creates robust automated scripts and exercises applications in the same way as an end user would. Robot is platform independent (Java); it runs on, and automates, all major systems such as Windows, Mac, Linux and Unix, plus mobile platforms.

The product has the same functionality, look and feel regardless of OS. There is no need to relearn the product on various platforms, and your scripts are completely transferable between environments. Robot is unlike any tool you are likely using today. It offers you the ability to approach application automation from the end-user perspective. Nearly all automation products manipulate and check at the code object level. This source instrumentation is not possible in many new and legacy applications today, for reasons such as a lack of API or SDK, a skill set that has disappeared, or a prohibition on bypassing UI security.

A person using an application does not read or see its code; they work with its interface. Therefore, without automating your application’s interface by seeing the product, can you really say with confidence that your application will deliver the desired experience, or, in RPA terms, automate that business process?

Major Benefits of T-Plan Robot Enterprise

Our Robots sit at the GUI level and operate the system as a user would, so they are bound by the same security restrictions and workflows as a human being. This means that there is no middle-layer hacking or API coding to bypass user controls, ensuring that an audited and secure business transaction is completed.

Platform independence (Java). T-Plan Robot runs on, and automates, all major systems, such as Windows, Mac, Linux, Unix and Solaris, and mobile platforms such as Android, iPhone, Windows CE and Windows Phone.

Test ANY system. As automation runs at the GUI level, the tool can automate most applications: Java, C/C#, .NET, HTML (web/browser), mobile and command line interfaces, as well as applications usually considered impossible to automate, like Flash, Flex and Mobile.

Support of Java scripts as well as a proprietary scripting language.

Record & Replay capability.

Supports testing on Single Desktop, ADB (Android), and over the RFB protocol (better known as VNC).

Non-jailbroken (non-rooted) Apple iOS devices can be automated using our iOS libraries.

Open architecture with extension interface allows easy customization and integration.

Optical Character Recognition (OCR), or integration with a relational DB via JDBC.

Powerful multiple image search tolerates changes of window layout, button position etc.

Object search & background detection to detect objects by colour, by colour range, and on different backgrounds.

Radar object detection and GIS map testing.

Tight integration with T-Plan Professional. Please view the integration reference for more information.

Excellent support services.

T-Plan Professional was arguably the first test management tool to be sold B2B. Since T-Plan’s inception for a project at the Bank of England to dematerialise share certificates in 1989, T-Plan has continually developed the T-Plan Test Case Management Suite. The product is modular in design, clearly differentiating between the analysis, design and management of the test assets from planning to execution.

The T-Plan Test Case Management Suite is optionally sold as a “Software-as-a-Service” (SaaS) solution. Available as a centrally managed hosted solution, with your MSSQL data in the cloud, or with application and data on-premise, we are delighted to offer our functionally rich client-server application via this incredible solution.

Analyze: ‘What to Test’

The first step within the Analyze phase is to gather and review the requirements from which the testing will be derived. One of the unique selling points of our tool is the best-of-breed Microsoft Office integration, which facilitates the importing or exporting of data to and from, for example, Word documents and Excel spreadsheets (also XML etc.). This module also provides statistical reporting metrics for testing progress, depth of coverage, risk factors and priorities.

Design: ‘How to Test’

A test specification hierarchy is created to model the test plan. This module supports full traceability, filtering, script re-usability, coverage and impact analysis, together with extensive reporting.

Manage: ‘When to Test’

This phase enables the scheduling of test scripts. Normally the test schedule will reflect the appropriate structure necessary for management reporting. This includes both the Schedule phase and the Test Execution phase. This forms the activity of planning and managing the detailed tasks to be performed once software is available to be tested.

How well are you doing? ‘What happened’

The execution phase enables the results of executed test scripts (manual and automated) to be recorded.

Coverage and success/failure charts can take the form of a snapshot and/or historical graphs highlighting progress. All reports can be exported electronically to file, emailed or printed directly. Reporting can be tailored to your project requirements.

Major Benefits of T-Plan Professional

Delivers a high level of communication between project members, and a consistent methodology for all testing processes.

The familiarity of a Windows Explorer-based product, with best-of-breed import and export of data from Microsoft Word or Excel.

Consistent framework for testing. Having a central repository results in all test teams being completely comfortable with testing irrespective of what project they are working on, making management information far more accurate.

Enables both technical and non-technical staff to analyse requirements, design scripts, manage schedules and execute tests.

Designed and built upon the. Also supports Agile testing methodologies.

Assign risk levels to requirements and tests to drive coverage and quality.

or On-Premise options available for application and database.

External document referencing to provide traceability and full impact-of-change analysis.

Requirements storage using a structured hierarchy for requirements creation, allowing you to drive quality.

Flexible and fully customisable. Create new attributes, new icons, relationships and entities to improve your productivity.

Expandable: enhance your usage by integrating your requirements, test automation, performance scripts and project plans into a single test management offering.

Re-use of tests. All tests can be re-used any number of times; the results can then be reported collectively or individually.

Coverage and success reporting: reports on coverage and success can be produced in a number of graphical illustrations to give true management information.

Fully integrated with the T-Plan Incident Manager System for bug recording, or integrate with JIRA or MS Visual Studio.


|
